ErUM-Data DEEP

DEEP FAQ

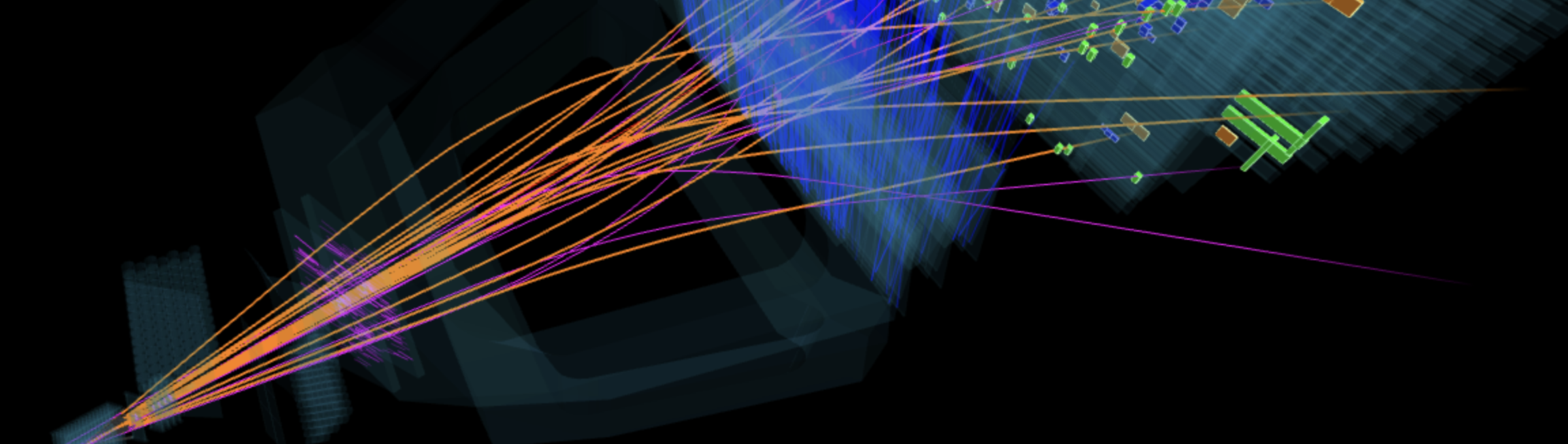

© LHCb / CERN

Computing Hardware

Modern data processing increasingly relies on heterogeneous hardware architectures. The DEEP project targets platforms including CPUs, GPUs, FPGAs, AI Engine arrays in AMD Versal devices, and machine learning accelerators such as Sima.ai Modalix.

Central Processing Unit (CPU)

A CPU is a general purpose processor that executes the instructions of a program. It is optimized for flexibility and control tasks and can run many different types of software. Compared with the other architectures described here, a CPU executes only a limited number of operations in parallel.

Graphics Processing Unit (GPU)

A GPU is a processor designed for highly parallel numerical computations. It contains many identical computing cores that perform the same simple operations on large data sets simultaneously. GPUs are widely used for scientific computing and machine learning.
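The data-parallel model described above can be sketched in plain Python. This is only an illustration of the programming model, not GPU code: the names `scale_and_offset_kernel` and `launch` are hypothetical, and the loop runs the per-element work one after another, whereas a GPU would distribute it across many cores.

```python
def scale_and_offset_kernel(i, x, y, out):
    """Work of one hypothetical GPU thread: handle element i only."""
    out[i] = 2.0 * x[i] + y[i]


def launch(kernel, n, *args):
    """Stand-in for a GPU launch: run the same kernel once per index.

    A real GPU would execute these iterations on many cores at once;
    this loop runs them sequentially and only illustrates the model.
    """
    for i in range(n):
        kernel(i, *args)


x = [1.0, 2.0, 3.0, 4.0]
y = [10.0, 20.0, 30.0, 40.0]
out = [0.0] * len(x)
launch(scale_and_offset_kernel, len(x), x, y, out)
print(out)  # [12.0, 24.0, 36.0, 48.0]
```

Because each index is processed independently of all others, the iterations can run in any order or all at once, which is the property that GPUs exploit.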

Field Programmable Gate Array (FPGA)

An FPGA is a programmable integrated circuit that allows digital hardware circuits to be defined after manufacturing. Algorithms are implemented as application-specific digital circuits that operate in parallel. This enables very low latency data processing and high throughput. Direct access to high speed communication interfaces makes FPGAs well suited for real time processing in scientific instruments.

Coarse Grained Reconfigurable Array (CGRA)

A CGRA is a processor architecture consisting of a grid of programmable arithmetic units connected by an interconnect network. Each unit performs operations such as addition, multiplication, or vector processing while data streams through the array. Unlike FPGAs, which are configured at the level of individual logic elements, CGRAs are configured at the level of higher level compute units and the connections between them. This typically allows efficient execution of highly parallel algorithms with lower programming complexity and higher energy efficiency than custom FPGA logic.
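The idea of configuring connections between fixed compute units, rather than the units themselves, can be sketched with Python generators. This is a simplified software analogy with hypothetical names (`processing_element`, `configure_array`); it models a single chain of units, whereas a real CGRA has a two-dimensional grid with a richer interconnect.

```python
def processing_element(operation, stream):
    """One hypothetical compute unit: apply a fixed operation to each value streaming past."""
    for value in stream:
        yield operation(value)


def configure_array(operations, source):
    """'Configure' the array by wiring units in sequence; each unit keeps its own operation."""
    stream = iter(source)
    for operation in operations:
        stream = processing_element(operation, stream)
    return stream


# Example configuration: multiply by 3, then add 1, then square.
pipeline = configure_array(
    [lambda v: v * 3, lambda v: v + 1, lambda v: v * v],
    [1, 2, 3],
)
result = list(pipeline)
print(result)  # [16, 49, 100]
```

Changing the algorithm here means changing the list of operations and their order, which mirrors how a CGRA is reprogrammed by changing the routing between units.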

AI Engine (AIE)

AI Engines are Very Long Instruction Word (VLIW) processors arranged as a programmable array and integrated into AMD Versal Adaptive SoCs. The array forms a CGRA architecture optimized for computationally intensive workloads such as signal processing and machine learning. Each AI Engine tile contains a vector processor, local memory, and high bandwidth connections to neighboring tiles. The AI Engine array operates alongside CPUs and FPGA logic within the same device, enabling heterogeneous real time data processing.

Application Specific Integrated Circuit (ASIC)

An ASIC is a custom integrated circuit designed for a specific task or algorithm. Unlike programmable processors, its functionality is fixed during manufacturing. ASICs can therefore achieve very high performance and energy efficiency for well defined workloads, but they cannot be reprogrammed after fabrication.

System on Chip (SoC)

An SoC integrates multiple system components on a single semiconductor chip. These components typically include processor cores, memory interfaces, accelerators, and communication interfaces. SoCs are widely used in embedded systems because they combine computing, data processing, and input/output functionality within one device.

Tensor Processing Unit (TPU)

A TPU is a machine learning accelerator developed by Google. It is designed to accelerate tensor operations used in neural networks, in particular large matrix multiplications. TPUs are primarily deployed in Google data centers for training and inference of machine learning models.
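To make the workload concrete, the following plain-Python sketch writes a matrix multiplication as explicit multiply-accumulate steps. It says nothing about how a TPU is built internally; the function name `matmul` and the example matrices are illustrative assumptions.

```python
def matmul(a, b):
    """Multiply two matrices (lists of rows) using explicit multiply-accumulate steps."""
    rows, inner, cols = len(a), len(b), len(b[0])
    assert all(len(row) == inner for row in a), "inner dimensions must match"
    c = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = 0
            for k in range(inner):
                acc += a[i][k] * b[k][j]  # one multiply-accumulate operation
            c[i][j] = acc
    return c


weights = [[1, 2], [3, 4]]
inputs = [[5, 6], [7, 8]]
product = matmul(weights, inputs)
print(product)  # [[19, 22], [43, 50]]
```

The number of multiply-accumulate operations is `rows * cols * inner`, so it grows quickly with matrix size. Each output element can be computed independently of the others, which is why dedicated hardware can carry out many of these operations in parallel.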

Versal (AMD Versal Adaptive SoC)

Versal devices are heterogeneous system on chip platforms developed by AMD. They integrate several computing architectures on a single chip, including CPUs, programmable FPGA logic, and arrays of AI Engine (AIE) processors. This allows different parts of an algorithm to run on the most suitable hardware while maintaining low latency and high data throughput.

Modalix (Sima.ai MLSoC)

Modalix is an embedded machine learning accelerator platform developed by Sima.ai. It integrates CPU cores, a dedicated machine learning accelerator, memory, and communication interfaces on a single chip. The platform is designed for efficient execution of neural network inference in embedded and edge computing systems.

Neural Processing Unit (NPU)

An NPU is a machine learning accelerator designed specifically to execute neural network computations efficiently. It contains hardware optimized for operations such as matrix multiplication. Examples include data center accelerators such as Google TPUs, neural processing units integrated into general purpose processors such as the Apple M-series and the processors used in Copilot+ PCs, and AI-focused SoC platforms such as Sima.ai Modalix.